Hi, I'm Delphin Kaduli

I build end-to-end machine learning systems and automated data platforms that optimize operations and protect financial infrastructure from risk. I design robust models engineered for production cloud deployment, not just for a notebook.

Available for hire

About Me

I started by trying to understand how systems behave. What began as curiosity about patterns and signals grew into a lifelong commitment to building technical solutions that deliver absolute clarity and automated confidence.

Today, my primary focus is fraud detection, risk analytics, and the modern data architectures that power them. I build for production rather than for the notebook, which means clean pipelines, optimized features, and resilient model deployments. My engineering discipline is grounded in a mindset shaped by faith, family, and movement. I believe that well-designed systems outperform isolated efforts, and I bring that collaborative, mission-driven approach to every team.

Looking ahead, I am driven by one foundational question: does this system help someone make a better call? That is the standard of excellence I build toward, from data ingestion and automated validation to hyperparameter optimization and cloud deployment.

Projects

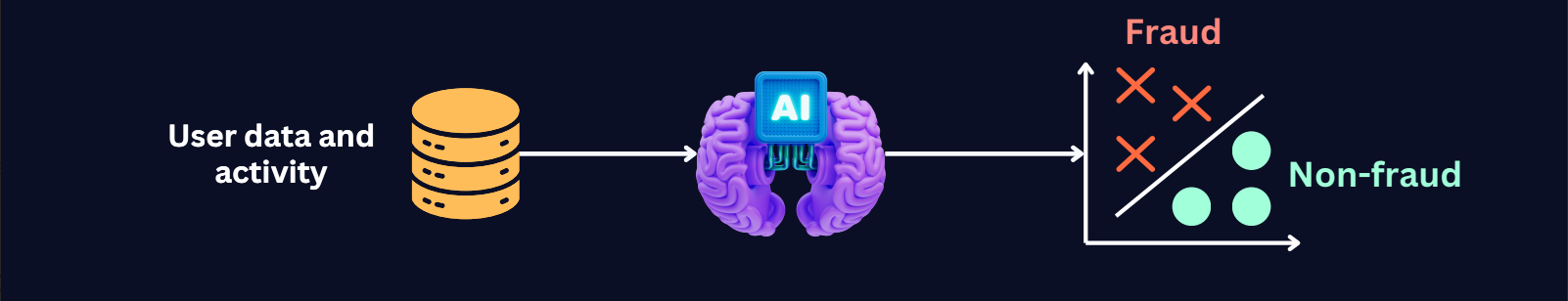

My work focuses on the intersection of Fraud Detection, Risk Analytics, Predictive Systems, and Data Engineering.

Fraud Detection System

Delivered 0.93 precision and 0.82 recall on a highly imbalanced dataset in which only 0.17% of transactions are fraudulent. In practice, about 93% of flagged transactions are confirmed fraud and 82% of all fraud is caught, which mitigates financial loss.
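To make those two numbers concrete, here is a minimal sketch of how precision and recall follow from confusion-matrix counts. The counts below are hypothetical, chosen only so the arithmetic lands near the reported figures; they are not taken from the project, and `precision_recall` is an illustrative helper, not the project's code.

```python
def precision_recall(tp: int, fp: int, fn: int) -> tuple[float, float]:
    """Return (precision, recall) from confusion-matrix counts.

    precision = TP / (TP + FP): share of flagged transactions that are fraud.
    recall    = TP / (TP + FN): share of all fraud that gets flagged.
    """
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0
    return precision, recall


# Hypothetical counts for illustration only (not project data).
tp, fp, fn = 82, 6, 18
precision, recall = precision_recall(tp, fp, fn)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

On a dataset this imbalanced, accuracy would be close to 100% even for a model that flags nothing, which is why precision and recall are the more informative pair.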

Demand Forecasting System

Achieved a 51% reduction in mean absolute error (MAE) by developing a real-time demand forecasting system on AWS to optimize dynamic pricing and fleet utilization.
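For readers unfamiliar with the metric, the sketch below shows how a relative MAE reduction is computed against a baseline forecast. All values are made up for illustration and do not reproduce the 51% figure; `mae` and `relative_reduction` are hypothetical helper names.

```python
def mae(actual: list[float], predicted: list[float]) -> float:
    """Mean absolute error between two equal-length sequences."""
    if len(actual) != len(predicted) or not actual:
        raise ValueError("inputs must be non-empty and the same length")
    return sum(abs(a - p) for a, p in zip(actual, predicted)) / len(actual)


def relative_reduction(baseline_mae: float, model_mae: float) -> float:
    """Fraction by which the model's MAE improves on the baseline's."""
    return (baseline_mae - model_mae) / baseline_mae


# Illustrative numbers only (not project data).
actual = [100, 120, 90, 110]
baseline_pred = [110, 110, 110, 110]  # e.g. a naive constant forecast
model_pred = [104, 115, 95, 110]

baseline_mae = mae(actual, baseline_pred)
model_mae = mae(actual, model_pred)
reduction = relative_reduction(baseline_mae, model_mae)
print(f"baseline={baseline_mae} model={model_mae} reduction={reduction:.0%}")
```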

Metro Transit Analytics

Engineered a production-ready Medallion data platform using Apache Airflow and PostgreSQL to process 1M+ real-time transit records, maintaining a 76.1% data yield across 8 automated quality gates.
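As a rough illustration of what "data yield across quality gates" means, the sketch below runs records through an ordered list of checks and reports the share that survives. The two gates, the field names (`route_id`, `delay_minutes`), and the thresholds are assumptions made up for this example; the actual platform's eight gates are not described here.

```python
from typing import Callable

Record = dict
Gate = tuple[str, Callable[[Record], bool]]


def apply_gates(records: list[Record], gates: list[Gate]):
    """Run each record through the gates in order.

    A record is rejected at the first gate it fails. Returns the records
    that passed every gate and a per-gate rejection count.
    """
    passed = []
    rejected_by = {name: 0 for name, _ in gates}
    for record in records:
        for name, check in gates:
            if not check(record):
                rejected_by[name] += 1
                break
        else:  # no gate failed
            passed.append(record)
    return passed, rejected_by


# Hypothetical gates and records for illustration only.
gates: list[Gate] = [
    ("has_route_id", lambda r: bool(r.get("route_id"))),
    ("plausible_delay", lambda r: -60 <= r["delay_minutes"] <= 600),
]
records = [
    {"route_id": "A1", "delay_minutes": 3},
    {"route_id": None, "delay_minutes": 5},
    {"route_id": "B2", "delay_minutes": -999},
    {"route_id": "C3", "delay_minutes": 12},
]

passed, rejected_by = apply_gates(records, gates)
data_yield = len(passed) / len(records) if records else 0.0
print(f"yield={data_yield:.1%} rejected_by={rejected_by}")
```

Tracking rejections per gate, rather than only the final yield, makes it easier to see which check is responsible when the yield drops.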

Technical Skills

Tools and technologies I use to build production-ready data systems

Python (Pandas, NumPy, Polars)

SQL (CTEs, Window Functions, Optimization)

ETL Pipelines (Airflow, Medallion Architecture)

Data Quality, Validation & Monitoring

Power BI & Tableau (DAX, Dashboards)

Data Warehousing (Snowflake, PostgreSQL)

Core Tech Stack

Python

SQL

PostgreSQL

Snowflake

AWS

Airflow

Docker

Scikit-learn

MLflow

FastAPI

Streamlit

Power BI

GitHub

Tableau

NumPy

Pandas

Experience

Professional Impact & Results

Data Scientist & Research Analyst

The Catholic University of America

Jan 2024 - Dec 2025

Contract / Research Fellow


Data Infrastructure Engineer

Women of Faith

Jul 2021 - Dec 2023

Full-time


What I'm Learning Now

Continuous growth in production ML and data engineering

Deep Learning & NLP

Building neural networks for text classification, sentiment analysis, and language understanding with PyTorch and Transformers.

Model Monitoring & Drift Detection

Implementing drift alerts, performance dashboards, and automated retraining triggers.

Feature Stores & Real-Time ML

Exploring Feast and online feature pipelines for fraud detection and low-latency scoring.

AWS Step Functions & Event-Driven Pipelines

Designing serverless workflows that orchestrate ML systems with reliability and traceability.

Advanced Airflow Patterns

Building more resilient DAGs, improving observability, and applying data quality checks at scale.

Contact Me

Let's build something amazing.